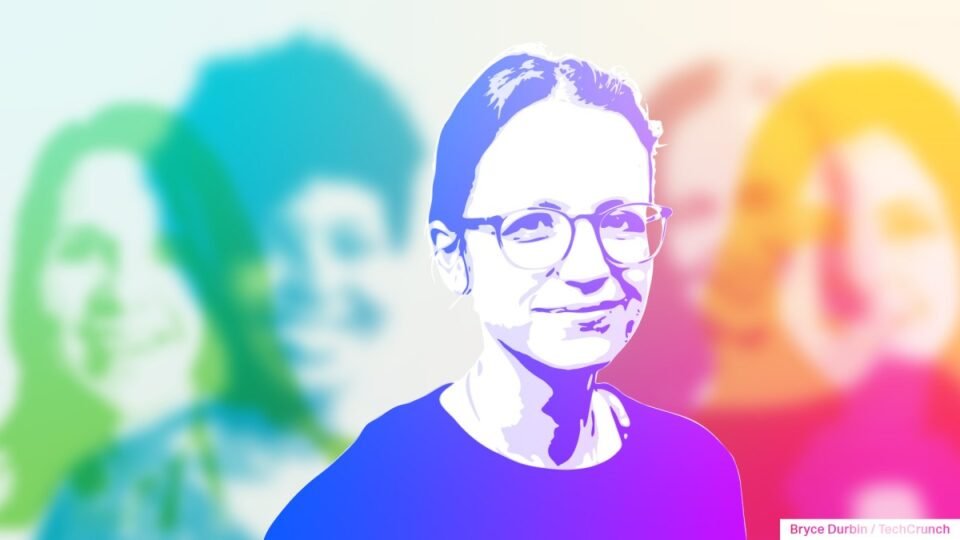

Francine Bennett uses data science to make AI more responsible

To give AI-focused women academics and others their well-deserved, and overdue, time in the spotlight, TechCrunch is launching a series of interviews focusing on remarkable women who’ve contributed to the AI revolution. We’ll publish several pieces throughout the year as the AI boom continues, highlighting key work that often goes unrecognized.

Francine Bennett is a founding member of the board at the Ada Lovelace Institute and currently serves as the organization’s interim director. Prior to this, she worked in biotech, using AI to find medical treatments for rare diseases. She also co-founded a data science consultancy and is a founding trustee of DataKind UK, which helps British charities with data science support.

Briefly, how did you get your start in AI? What attracted you to the field?

I started out in pure maths and wasn’t so interested in anything applied. I enjoyed tinkering with computers but thought any applied maths was just calculation and not very intellectually interesting. I came to AI and machine learning later on, when it became obvious to me and to everyone else that data was becoming much more abundant in lots of contexts. That opened up exciting possibilities to solve all kinds of problems in new ways using AI and machine learning, and they were much more interesting than I’d realized.

What work are you most proud of in the AI field?

I’m most proud of the work that’s not the most technically elaborate but that unlocks some real improvement for people: for example, using ML to try to find previously unnoticed patterns in patient safety incident reports at a hospital to help the medical professionals improve future patient outcomes. And I’m proud of representing the importance of putting people and society rather than technology at the center at events like this year’s U.K. AI Safety Summit. I think it’s only possible to do that with authority because I’ve had experience both working with and being excited by the technology and getting deeply into how it actually affects people’s lives in practice.

How do you navigate the challenges of the male-dominated tech industry and, by extension, the male-dominated AI industry?

Mainly by choosing to work in places and with people who are interested in the person and their skills over the gender and seeking to use what influence I have to make that the norm. Also working within diverse teams whenever I can — being in a balanced team rather than being an exceptional “minority” makes for a really different atmosphere and makes it much more possible for everyone to reach their potential. More broadly, because AI is so multifaceted and is likely to have an impact on so many walks of life, especially on those in marginalized communities, it’s obvious that people from all walks of life need to be involved in building and shaping it, if it’s going to work well.

What advice would you give to women seeking to enter the AI field?

Enjoy it! This is such an interesting, intellectually challenging, and endlessly changing field — you’ll always find something useful and stretching to do, and there are plenty of important applications that nobody’s even thought of yet. Also, don’t be too anxious about needing to know every single technical thing (literally nobody knows every single technical thing) — just start by starting on something you’re intrigued by, and work from there.

What are some of the most pressing issues facing AI as it evolves?

Right now, I think it’s a lack of a shared vision of what we want AI to do for us and what it can and can’t do for us as a society. There’s a lot of technical advancement going on currently, which is likely having very high environmental, financial, and social impacts, and a lot of excitement about rolling out those new technologies without a well-founded understanding of potential risks or unintended consequences. Most of the people building the technology and talking about the risks and consequences are from a pretty narrow demographic. We have a window of opportunity now to decide what we want to see from AI and to work to make that happen. We can think back to other types of technology and how we handled their evolution or what we wish we’d done better. What are our equivalents, for AI products, of crash-testing new cars; holding liable a restaurant that accidentally gives you food poisoning; consulting impacted people during planning permission; appealing an AI decision as you could a human bureaucracy?

What are some issues AI users should be aware of?

I’d like people who use AI technologies to be confident about what the tools are and what they can do and to talk about what they want from AI. It’s easy to see AI as something unknowable and uncontrollable, but actually, it’s really just a toolset — and I want humans to feel able to take charge of what they do with those tools. But it shouldn’t just be the responsibility of people using the technology — government and industry should be creating conditions so that people who use AI are able to be confident.

What is the best way to responsibly build AI?

We ask this question a lot at the Ada Lovelace Institute, which aims to make data and AI work for people and society. It’s a tough one, and there are hundreds of angles you could take, but there are two really big ones from my perspective.

The first is to be willing sometimes not to build or to stop. All the time, we see AI systems with great momentum, where the builders try and add on “guardrails” afterward to mitigate problems and harms but don’t put themselves in a situation where stopping is a possibility.

The second is to really engage with and try to understand how all kinds of people will experience what you’re building. If you can really get into their experiences, then you’ve got way more chance of the positive kind of responsible AI, building something that truly solves a problem for people based on a shared vision of what good would look like, as well as avoiding the negative kind: accidentally making someone’s life worse because their day-to-day existence is just very different from yours.

For example, the Ada Lovelace Institute partnered with the NHS to develop an algorithmic impact assessment that developers should do as a condition of access to healthcare data. This requires developers to assess the possible societal impacts of their AI system before implementation and bring in the lived experiences of people and communities who could be affected.

How can investors better push for responsible AI?

By asking questions about their investments and their possible futures — for this AI system, what does it look like to work brilliantly and be responsible? Where could things go off the rails? What are the potential knock-on effects for people and society? How would we know if we need to stop building or change things significantly, and what would we do then? There’s no one-size-fits-all prescription, but just by asking the questions and signaling that being responsible is important, investors can change where their companies are putting attention and effort.